Introduction

The rapid adoption of Generative AI (GenAI) in India is more than a technological advancement: it is a social phenomenon reshaping power relations, bureaucratic authority, and governance practices. While AI promises innovation and administrative efficiency, it also raises questions of data privacy, inference risks (the possibility that sensitive insights are deduced from the prompts users enter), and digital sovereignty.

Sociologically, AI can be analyzed through the lens of power, knowledge, and social structures, highlighting how technology intersects with hierarchy, inequality, and governance legitimacy.

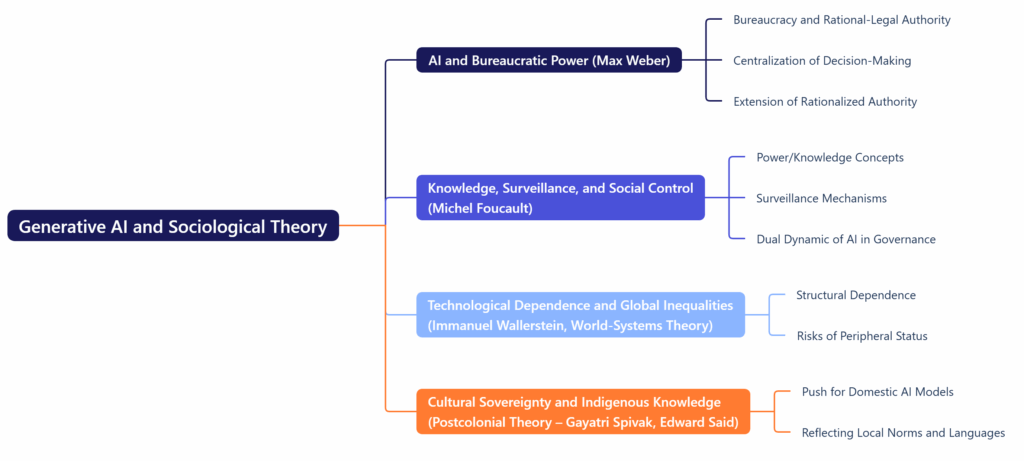

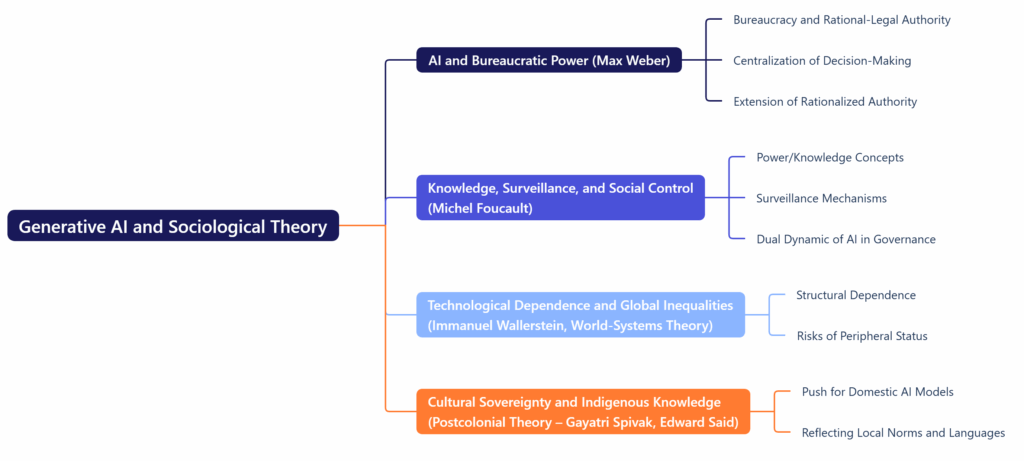

Generative AI and Sociological Theory

- AI and Bureaucratic Power (Max Weber)

- Weber’s theory of bureaucracy and rational-legal authority helps us understand how AI is embedded in Indian governance.

- When senior officials use GenAI tools, decision-making power can centralize further and hierarchical control can be reproduced, which Weber would read as an extension of rationalized authority. The prompts themselves can also reveal sensitive priorities or strategies.

- Knowledge, Surveillance, and Social Control (Michel Foucault)

- Foucault’s concepts of power/knowledge and disciplinary societies illuminate inference risks. AI’s ability to deduce sensitive strategic insights from prompts mirrors surveillance mechanisms, where knowledge becomes a tool of control.

- AI in governance creates a dual dynamic: it can enhance administrative efficiency but also monitor bureaucrats and citizens, raising concerns of social regulation and control.

- Technological Dependence and Global Inequalities (Immanuel Wallerstein, World-Systems Theory)

- India’s reliance on foreign AI platforms reflects structural dependence in the global knowledge economy, where technological dominance is concentrated in a few core nations.

- Wallerstein’s theory explains how developing nations risk peripheral status, with AI dependence reproducing inequalities in knowledge production, innovation, and governance autonomy.

- Cultural Sovereignty and Indigenous Knowledge (Postcolonial Theory – Gayatri Spivak, Edward Said)

- The push for domestic AI models aligns with postcolonial critiques: AI trained on Indian contexts ensures that knowledge and technological outputs reflect local norms, languages, and governance priorities, rather than being shaped by foreign epistemic frameworks.

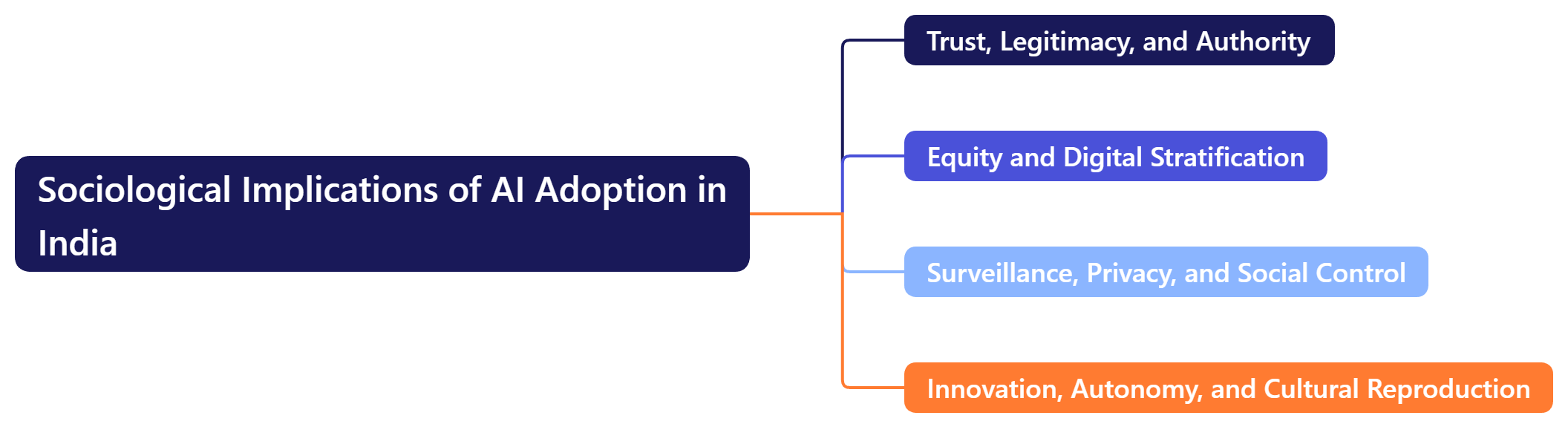

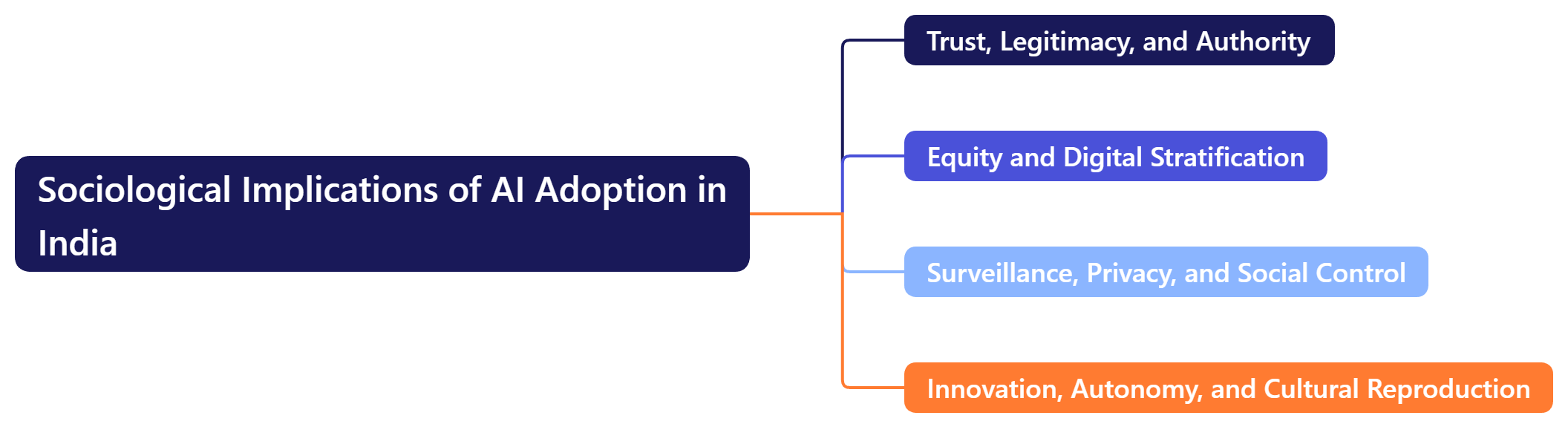

Sociological Implications of AI Adoption in India

- Trust, Legitimacy, and Authority

- AI governance affects social trust in institutions. Over-reliance on foreign platforms can undermine legitimacy, while domestically controlled AI can foster trust and reinforce national sovereignty.

- This aligns with Anthony Giddens’ structuration theory, where technology is both shaped by and shapes institutional rules and social practices.

- Equity and Digital Stratification

- Dependence on foreign AI platforms creates inequalities between technologically empowered institutions and those lacking access, reproducing a new form of social stratification.

- AI development and access, therefore, are not just technical issues but sociological ones, influencing who participates in knowledge creation and decision-making.

- Surveillance, Privacy, and Social Control

- Following Foucault, inference risks are a form of disciplinary surveillance, where AI can monitor bureaucrats’ decision-making patterns or reveal state priorities.

- Ensuring prompt sanitization, secure governance frameworks, and air-gapped systems helps counterbalance this centralization of knowledge and control.

- Innovation, Autonomy, and Cultural Reproduction

- Indigenous AI initiatives, like India’s 12 planned LLMs, act as tools of cultural and epistemic reproduction, ensuring AI reflects Indian law, languages, and governance priorities.

- This resonates with Pierre Bourdieu’s theory of cultural capital, where local knowledge and expertise are institutionalized to maintain social and symbolic power.

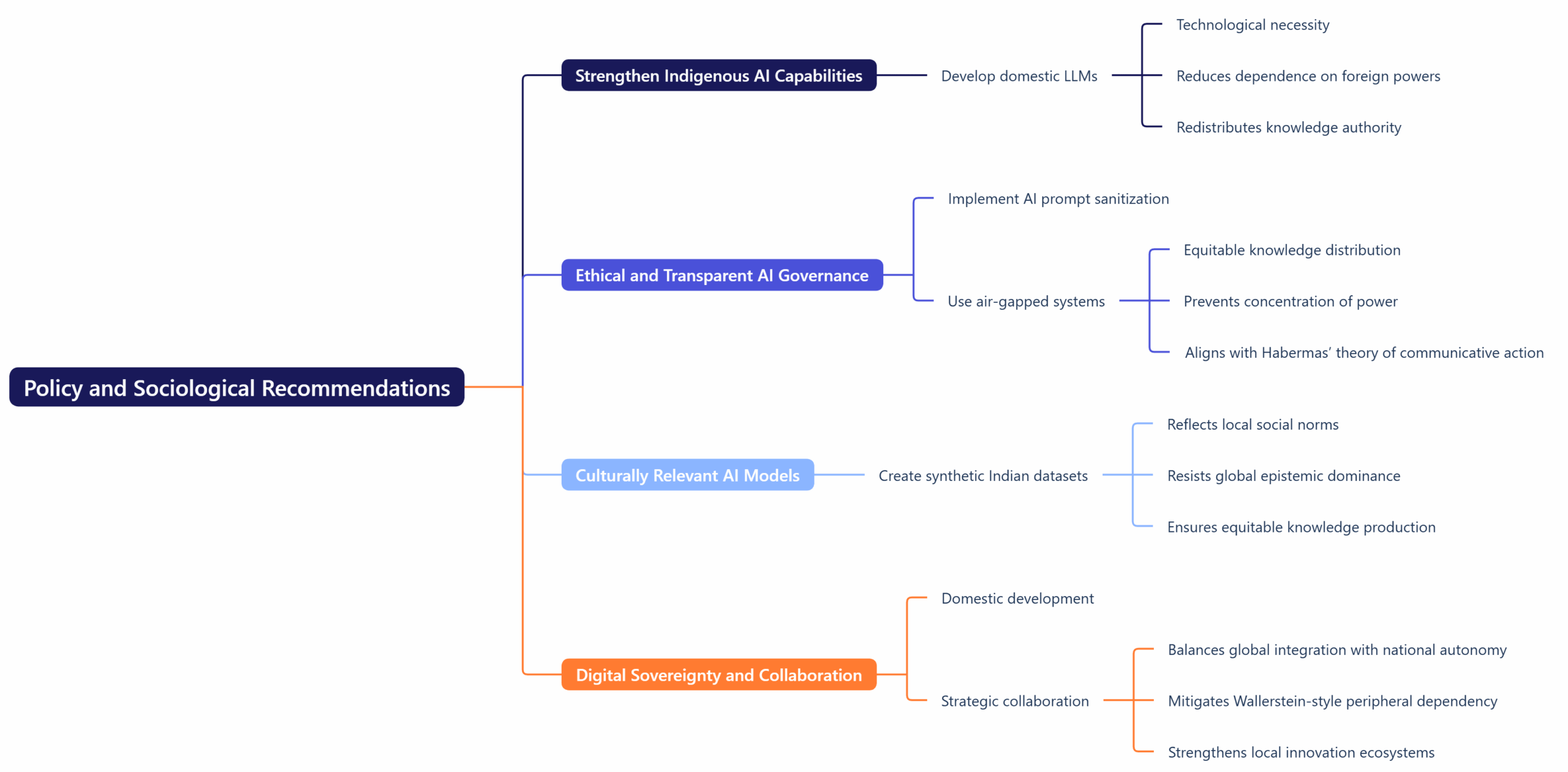

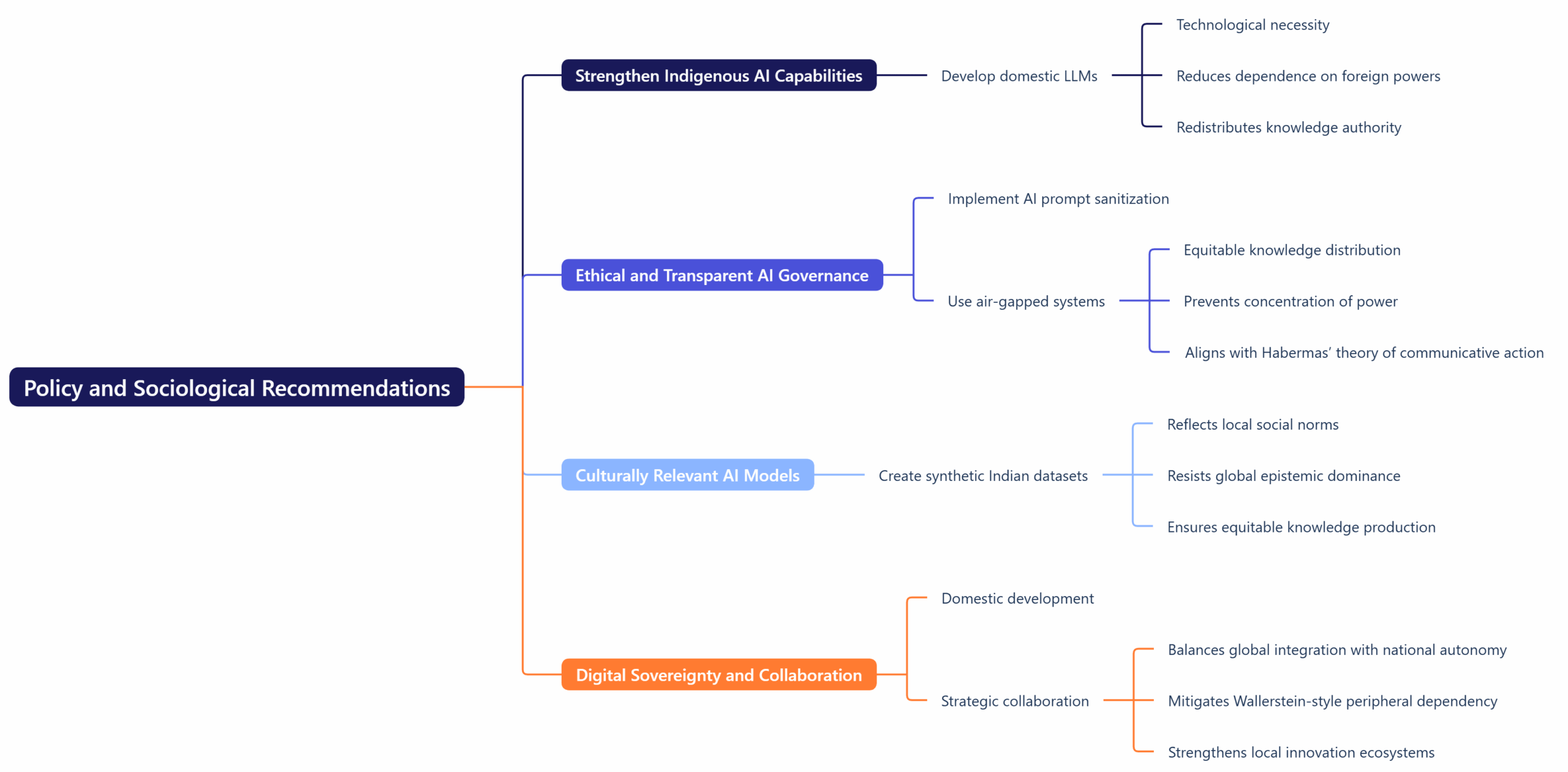

Policy and Sociological Recommendations

- Strengthen Indigenous AI Capabilities

- Developing domestic LLMs is both a technological and sociological necessity, reducing dependence on foreign powers and redistributing knowledge authority to Indian institutions.

- Ethical and Transparent AI Governance

- Implementing AI prompt sanitization and air-gapped systems can support a more equitable distribution of knowledge and help prevent concentration of power. This aligns with Habermas' theory of communicative action: technology should enable democratic deliberation, not secrecy.

- Culturally Relevant AI Models

- Creating synthetic Indian datasets helps AI reflect local social norms and legal systems, resisting global epistemic dominance and supporting equitable knowledge production.

- Digital Sovereignty and Collaboration

- Domestic development combined with strategic collaboration can balance global integration with national autonomy, mitigating Wallerstein-style peripheral dependency while strengthening local innovation ecosystems.
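
As a rough illustration of the prompt sanitization recommended above, the following Python sketch redacts a few kinds of identifying detail before a prompt leaves a government system. The pattern names, the `sanitize_prompt` function, and the file-number format are hypothetical and chosen only for illustration. A real deployment would need a reviewed, department-specific rule set and would not rely on regular expressions alone.

```python
import re

# Hypothetical redaction rules, for illustration only.
REDACTION_PATTERNS = {
    # Email addresses such as name@example.gov.in
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    # 10-digit mobile numbers starting with 6-9
    "PHONE": re.compile(r"\b[6-9]\d{9}\b"),
    # Assumed file-number format, e.g. "F.No. 12/3/2024-Policy"
    "FILE_NO": re.compile(r"\bF\.No\.\s*[\w/-]+", re.IGNORECASE),
}


def sanitize_prompt(prompt: str) -> str:
    """Replace every span matched by a rule with a [LABEL] placeholder."""
    for label, pattern in REDACTION_PATTERNS.items():
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt


if __name__ == "__main__":
    raw = "Contact officer@example.gov.in or 9876543210 about F.No. 12/3/2024-Policy."
    print(sanitize_prompt(raw))
```

Pattern-based redaction of this kind removes only explicit identifiers. It does not address the inference risks discussed earlier, where sensitive priorities can be deduced from the substance of a prompt, which is why the recommendation pairs sanitization with air-gapped systems.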

Conclusion

Generative AI in India is more than a technical tool: it is a social institution reshaping bureaucratic power, global inequalities, and knowledge authority. Sociological perspectives show that:

- Technology reproduces and restructures power hierarchies (Weber, Foucault).

- Dependence on foreign platforms reflects structural inequalities (Wallerstein).

- Indigenous development safeguards cultural sovereignty and equitable knowledge production (Spivak, Bourdieu).

To ensure AI promotes inclusive governance, social equity, and national sovereignty, India must prioritize indigenous AI, secure governance frameworks, and ethical oversight, embedding technology within a socially conscious and democratic framework.
